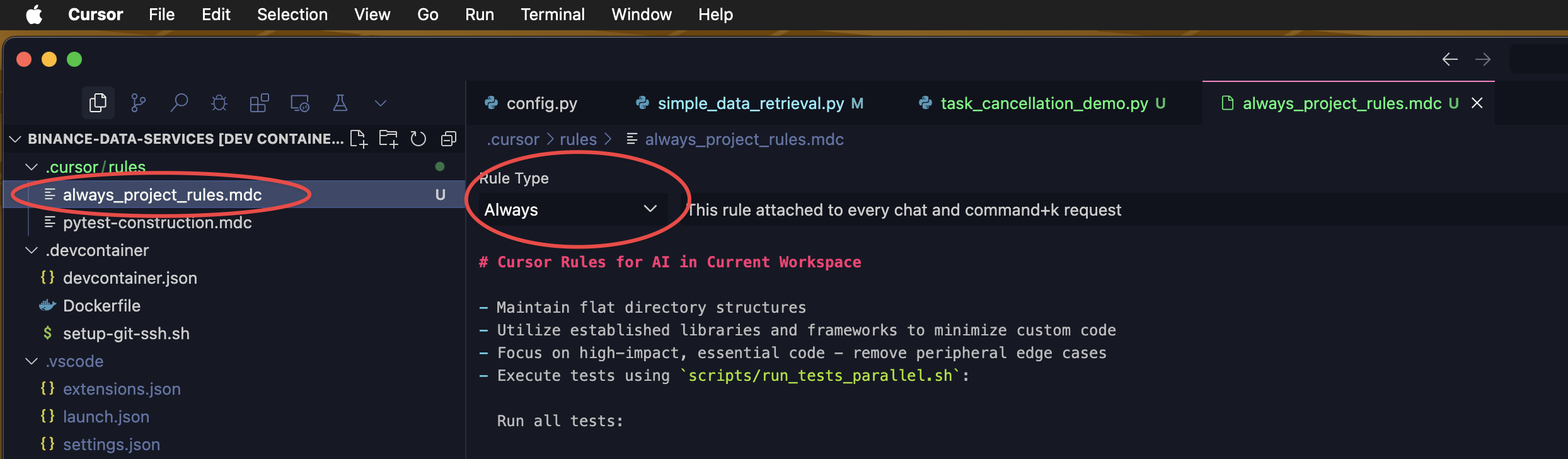

Always “ON” Cursor Rules for Data Source Manager (DSM) Project

Source: Notion | Last edited: 2025-04-09 | ID: 1d02d2dc-3ef...

# Cursor Rules for AI in Current Workspace

- Maintain flat directory structures

- Utilize established libraries and frameworks to minimize custom code

- Focus on high-impact, essential code; remove peripheral edge cases

- Execute tests using `scripts/run_tests_parallel.sh`:

  Run all tests:

  ```bash
  scripts/run_tests_parallel.sh
  ```

  Run a specific test directory:

  ```bash
  scripts/run_tests_parallel.sh tests/cache_structure
  ```

- `utils/logger_setup.py` is used for all debug logging

- Resolve type hints properly instead of using type ignore comments

- Include the `--no-cli-pager` flag in AWS CLI commands

- Implement guard clause pattern for cleaner control flow

- Use full project-relative paths for file operations

- Move or rename files and directories with `git mv`

- Assume `curl_cffi` and `httpx` are installed and available for import, so there is no need to wrap their imports in a `try` block

- Prefer `curl_cffi` over httpx, requests, aiohttp, and pycurl for HTTP client implementations
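The guard clause rule above can be illustrated with a minimal sketch. The function `normalize_symbol` and its validation rules are hypothetical and not part of the DSM codebase; they only show the shape of the pattern, where each invalid case exits early and the main path stays unindented.

```python
from typing import Optional


def normalize_symbol(symbol: Optional[str]) -> str:
    """Return an upper-cased trading symbol (hypothetical helper)."""
    # Guard clauses: reject each invalid case first and exit early.
    if symbol is None:
        raise ValueError("symbol is required")

    cleaned = symbol.strip().upper()
    if not cleaned:
        raise ValueError("symbol must not be empty")
    if not cleaned.isalnum():
        raise ValueError(f"symbol contains invalid characters: {symbol!r}")

    # Main path: no nested if/else needed once the guards have passed.
    return cleaned
```

Compared with nested `if`/`else` blocks, this keeps the successful path at the lowest indentation level, which is the control-flow style the rule asks for.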

## Code Quality

- Use pylint for Python linting (no flake8)

- Configure pylint via CLI flags rather than config files

## Logging

- Use `utils/logger_setup.py` as the custom logging import when creating any new Python script, as follows: `from utils.logger_setup import logger`

## Principles of PyTest

- Focus only on the most low-hanging, real-world use cases.

- Make good use of `caplog` and `utils/logger_setup.py` in PyTest tests.

- Timeouts must never exceed 5 seconds. Set them in the core of the script under test or in its utility business logic, never in the test code.

- For all API data retrieval tests (e.g., Vision API), unless testing cross-boundary or date format changes, we start from the current date and search backward up to 3 days for the latest available date with downloadable zipped files. Availability checks must be handled in business scripts, not in test scripts.

- Never use any suppression or silencing techniques to avoid confronting errors or warnings.

- We cannot intentionally force a third-party LIVE network to produce real-world errors, so we don’t need that kind of testing; rely on `tenacity`-related techniques instead.

- Don’t deal with anything related to options market data. Remove all concerns and code related to the options market.

- We trade spot and perpetual futures only, never futures with an expiration date. Remove all concerns and code related to futures with expiration dates.

- Test code must not contain any business logic of its own; its purpose is to test the business logic of the target scripts.

- Don’t use `pytest.skip()`. Handle errors without skipping.

- Follow the principle that resources should be properly initialized and cleaned up, especially for network connections.

- Explicitly configure `asyncio_default_fixture_loop_scope = function` for `pytest-asyncio`. This ensures consistent event loop behavior and prevents deprecation warnings. This configuration should be managed either in `pytest.ini` or, preferably, directly within the test execution script (e.g., `run_tests_parallel.sh`) for self-contained test runs.
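The backward date search described above (start from the current date, look back up to 3 days) might be sketched as follows. This is an illustration under stated assumptions, not the project's implementation: the name `find_latest_available_date` and the `is_available` callback are hypothetical, and the sketch assumes "up to 3 days" means the start date plus three earlier days. In line with the rules, such a helper would live in a business script, with the tests only calling it.

```python
from datetime import date, timedelta
from typing import Callable, Optional


def find_latest_available_date(
    is_available: Callable[[date], bool],
    start: date,
    max_lookback_days: int = 3,
) -> Optional[date]:
    """Return the newest date with available data, or None if none is found.

    `is_available` is a hypothetical callback standing in for the real
    availability check performed by the business script.
    """
    # Check the start date first, then walk backward one day at a time.
    for offset in range(max_lookback_days + 1):
        candidate = start - timedelta(days=offset)
        if is_available(candidate):
            return candidate
    # Nothing found within the lookback window.
    return None
```

A caller would pass `date.today()` as `start` together with the real availability check, keeping the lookback policy in one place rather than repeating it in each test.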

## Problem Solving Approach

- On API-related issues, always use `curl` from the terminal to find the root cause first.

- Resolve each `WARNING` by presenting the available options for me to choose from before proceeding further.

- When encountering API-related issues, use terminal-based `curl` to find out more before making code changes.

- Address deprecation warnings by properly configuring the library settings in configuration files or test execution scripts, rather than suppressing them with warning filters. Prioritize explicit configuration to ensure consistent and future-proof test behavior.

## No Mocking and No Sample Data

- Never use sample or mock data in PyTest cases; use real-world data only.

- Always use actual integration tests against real components rather than mocked interactions.

- We can accept synthetic test scenarios (e.g. for stress testing purposes) that still rely on real-world interfaces and data.

- If certain tests can’t be run due to external dependencies, properly document why with appropriate markers rather than mocking.

# Testing Principles (PyTest Execution)

- Use `scripts/run_tests_parallel.sh` as the only entry point for running tests.

- Do not invoke `pytest` directly.

- Avoid all config files: `pytest.ini`, `tox.ini`, `pyproject.toml`.

- Pass all configurations and flags explicitly via the `scripts/run_tests_parallel.sh` script arguments; never rely on hidden config files.

- Centralize execution. Standardize behavior. Ensure reproducibility.

- No implicit state. No config sprawl. No surprises.

- CLI arguments over config files. Script for execution control.
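As an illustration of "CLI arguments over config files", the sketch below builds the kind of explicit argument list a wrapper could forward to the test runner. The helper `build_pytest_args` is hypothetical, and the contents of `scripts/run_tests_parallel.sh` are not shown in this document, so this is only an assumption about how such flags could be passed. It relies on pytest's `-o` (override ini option) flag to set `asyncio_default_fixture_loop_scope` without a `pytest.ini`.

```python
from typing import List


def build_pytest_args(target: str) -> List[str]:
    """Return explicit pytest arguments for one test target (hypothetical helper)."""
    return [
        target,
        "--asyncio-mode=auto",  # pytest-asyncio mode, set on the command line
        "--no-header",          # keep output short
        # Override the ini option inline instead of relying on a config file.
        "-o",
        "asyncio_default_fixture_loop_scope=function",
    ]
```

Because every setting appears in the argument list, a test run can be reproduced from the command alone, with no hidden state in config files.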

## How to use scripts/run_tests_parallel.sh

- DO NOT run the entire suite with `scripts/run_tests_parallel.sh -v`, because the output of hundreds of test cases will overload the LLM context window.

### Example Usage

`PYTHONPATH=/workspaces/binance-data-services pytest "tests/market_types/test_vision_market_types.py::test_data_source_manager_market_types" -v --asyncio-mode=auto --no-header`